What is Collocation?

It's the original scalable data center solution!

What is collocation? Why do half the people spell it "colocation" and the other half "collocation", and why does everyone seem to care so much about it?

We really aren't going to spend any serious time on the grammar question. Entertaining as they are, "religious battles" about technology terms are a very longstanding tradition. We wouldn't want to pick sides, as we are quite practical. And fortunately, this one is much tamer than spaces-vs-tabs and vi-vs-emacs.

For fun, we will use co-location, colocation and collocation interchangeably throughout this article.

So, let's get to the meat of the question: what is collocation?

When companies first started using technology stacks, they hosted their own equipment. Every company had its "server room", although for some it was the closet at the back, while others likely remember with embarrassment their critical servers under the IT manager's desk.

Either way, you needed someplace to store your servers, and so you put them in the server room or "comms cabinet". You also needed to connect them to the Internet, so you ran a cable from the server room to your Internet Service Provider. It needed to be a wide enough pipe (bandwidth) for your busiest traffic, so you paid quite a lot for it.

Of course, it isn't just you doing this; so is every other company!

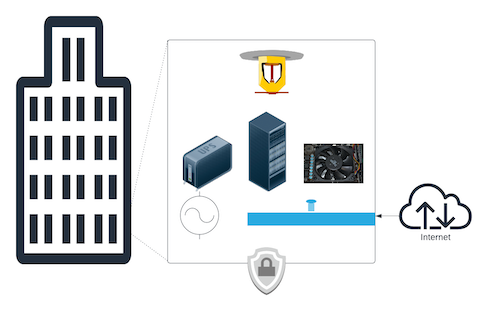

Over time, as the number of servers grew, the power and cooling requirements grew as well, as did the Internet requirements. Further, because of the heat generated, there was a real risk of fire if the wrong material (paper or cardboard) was stored nearby. Finally, the value of these servers grew: not just the equipment itself, which was very expensive, but the data on those servers. Leaving them in a simple room or closet with limited protection became a risk too great to accept, not to mention the insurance companies, who didn't like the idea of insuring all of that gear in easily accessible places.

So, companies started building "data centers": purpose-built facilities with power and cooling and security and monitoring. Many companies started building them in their own buildings, but over time, the cost of building and maintaining these facilities became a burden. Every one of them needed similar, expensive equipment: raised floors, cooling systems, power systems, conduits for fibre-optic cable, links to the Internet; we could keep going. Plus, all of it needed to be redundant. Not to mention compliant with local code in each location. Add in backup power supply: UPS units, backup generators, fuel supply. That is a lot of space and expense!

Which brings us to... chairs*.

Almost no one makes their own chairs, unless they are a carpenter or enjoy the hobby. You need all of the equipment to shape and sand and paint them, plus ventilation and cleaning, fire codes, sourcing materials; the list goes on. Which is why you buy chairs from a chair store.

Similarly, very few companies have their own electricity generators. We rely on utilities to provide electricity for us, as running our own coal plant, oil generator or gas turbine is a little much and incredibly inefficient (which may be a little different with solar).

Companies looked at the expenses and realized that they were becoming experts on everything completely unrelated to their businesses, and very expensive things to boot.

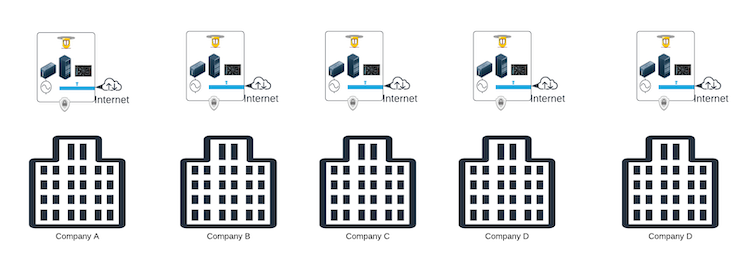

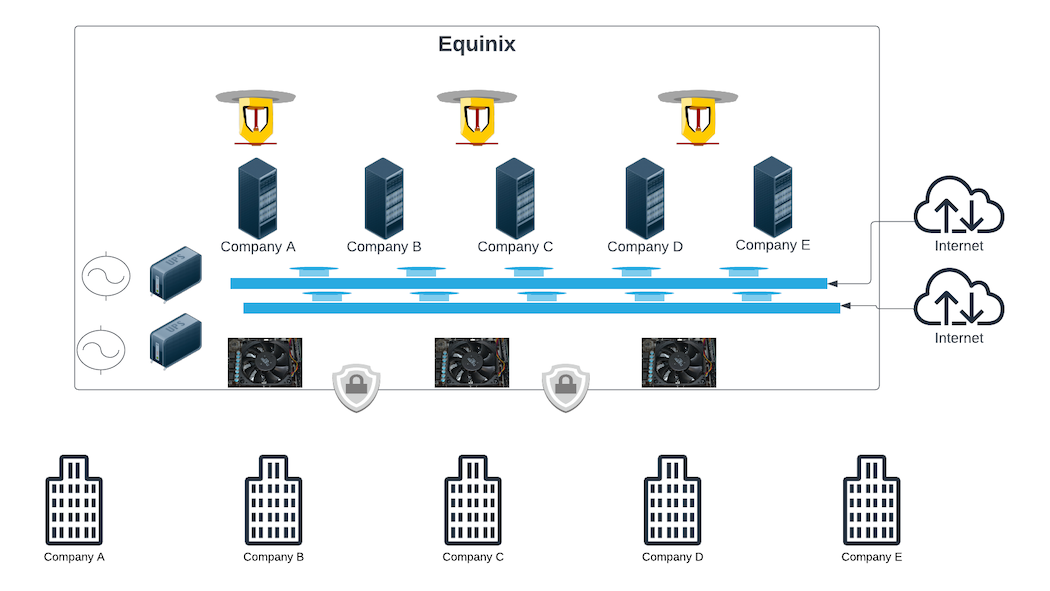

At the same time, other companies began to realize that there was an opportunity to offer "hosting your servers as a service". Of course, this was years before Software-as-a-Service, SaaS, became the hot technology, and so no one called it "hosting as a service", but that is just what it was. Companies like Exodus and Cable & Wireless, and a little bit later, Equinix, came along and said, "let us provide you with a place to put your servers. We will ensure physical security, and power and cooling and redundancy and all of that. You just need to tell us what physical setup you want and what connections to provide."

This was a great plan! Companies could focus on their core business, and let someone else worry about the common services at scale.

And so, collocation was born. Companies would "locate" their servers in a data center managed by someone else, along with - or co-located with - other companies.

In collocation, you can do any combination you want. If you prefer, have three servers you own in a rack managed by the data center. Or, if this works better for you, have your own rack. If you need higher security and privacy, put up one or more of your own racks inside a cage that is all yours.

You are not limited as to what equipment you can put in the data center, subject to power, cooling and regulatory approval (we are pretty sure they don't approve of your deploying a fission reactor in your cage).

The common theme to all of this is, the colocation provider manages:

- the physical plant

- network services

- power

- cooling

You own and manage servers and equipment, and everything between them.

Many colocation providers, including Equinix, offer some sort of "hourly physical access", or "smart hands". Rather than having to travel to Ashburn, VA, or Slough, UK, just to install a server or add a cross-connect, they will do it for you at a standard hourly rate.

How does all this matter to cloud?

Each level of this process has been about evolving from managing lower-level basic operating services to outsourcing them to a common provider, and then focusing on the next level up. As companies matured, most realized that they didn't need to be experts in power, cooling, uninterruptible power supplies (UPS), backup generators, fire suppression, physical security, etc, and so they outsourced those to a colocation provider.

Of course, some companies very much need to be experts in those areas, and so they are the ones who will build their own buildings and handle all of the above.

All this works great.

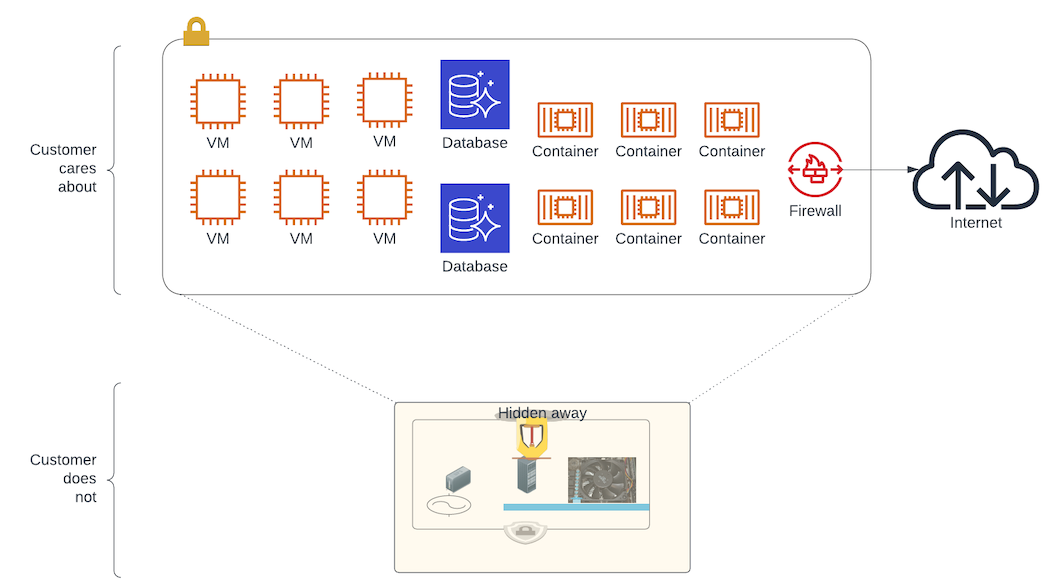

Over time, some companies began to realize that what they are running in colocation cages and racks isn't really very different from what every other company is running. They just need someplace to run their processes, store their data and access the Internet. Server physical specifications, rack sizes and layouts, cages, cross-connects: these are all just details to them. If only they could outsource those, too, and just get a physical or virtual machine, network connectivity and storage on demand, perhaps by the hour or the month.

Sound familiar?

Welcome to cloud.

Cloud is the next step in the evolution of colocation. It is the next level of abstraction, where you don't need to worry about the things that matter in collocation. If your requirements are generic enough, then cloud is perfect for you. If you need specialized hardware or detailed control over layout, power, cooling and network connects, then colocation, like Equinix, is tailored to your needs. And if you are right in the middle, where you don't need all of the lower-level details, but you do need your processes to run right on the metal, then Equinix Metal is the perfect solution for you.
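For readers who like to see a decision written down, here is a minimal sketch of the rule of thumb in the paragraph above. It is only an illustration: the `Requirements` fields and the `suggest_hosting` function are hypothetical names invented for this example, and a real decision would weigh cost, compliance and much more.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # All three fields are hypothetical simplifications of the criteria above.
    needs_specialized_hardware: bool = False
    needs_facility_control: bool = False  # layout, power, cooling, network connects
    needs_bare_metal: bool = False        # processes must run right on the metal


def suggest_hosting(req: Requirements) -> str:
    """Return the hosting model the rule of thumb points to."""
    # Specialized hardware or detailed facility control points to colocation.
    if req.needs_specialized_hardware or req.needs_facility_control:
        return "colocation"
    # No lower-level details needed, but direct hardware access is: bare metal.
    if req.needs_bare_metal:
        return "bare metal"
    # Generic requirements: cloud.
    return "cloud"


print(suggest_hosting(Requirements()))
print(suggest_hosting(Requirements(needs_bare_metal=True)))
print(suggest_hosting(Requirements(needs_facility_control=True)))
```

The order of the checks mirrors the paragraph: colocation wins whenever you need control over the physical details, bare metal sits in the middle, and cloud is the default when nothing more specific applies.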

- Image licensed under Creative Commons Attribution 4.0; original.
